Reducing Wasted Paid Search Spend with Query-Level Behavior Analysis

Overview

Standard paid search optimization focuses on what happens before the click — CTR, CPC, query relevance. But clicks don’t equal qualified traffic. I developed a methodology that adds a missing layer: evaluating traffic quality based on what users actually do after they land on the site.

The result is a scalable, repeatable framework for identifying and eliminating wasted spend that traditional methods consistently miss. I have applied this approach across healthcare, automotive, and higher education, and the same patterns emerge every time.

The Problem

Paid search teams typically manage negative keywords through manual search term reviews and predefined exclusion lists. This approach has two fundamental gaps: it relies on what teams already expect to find, and it has no connection to post-click behavior.

The result is campaigns that continue spending against traffic with no realistic path to conversion — traffic that looks plausible at the query level but bounces immediately on arrival.

Why Existing Methods Fall Short

Traditional workflows focus on manual review of search terms, predefined exclusion lists, and surface-level query relevance. This creates two key gaps: no connection to post-click behavior such as bounce rate and engagement, and no scalable way to identify unexpected or ambiguous intent. The result is optimization based on clicks, not traffic quality.

The Approach

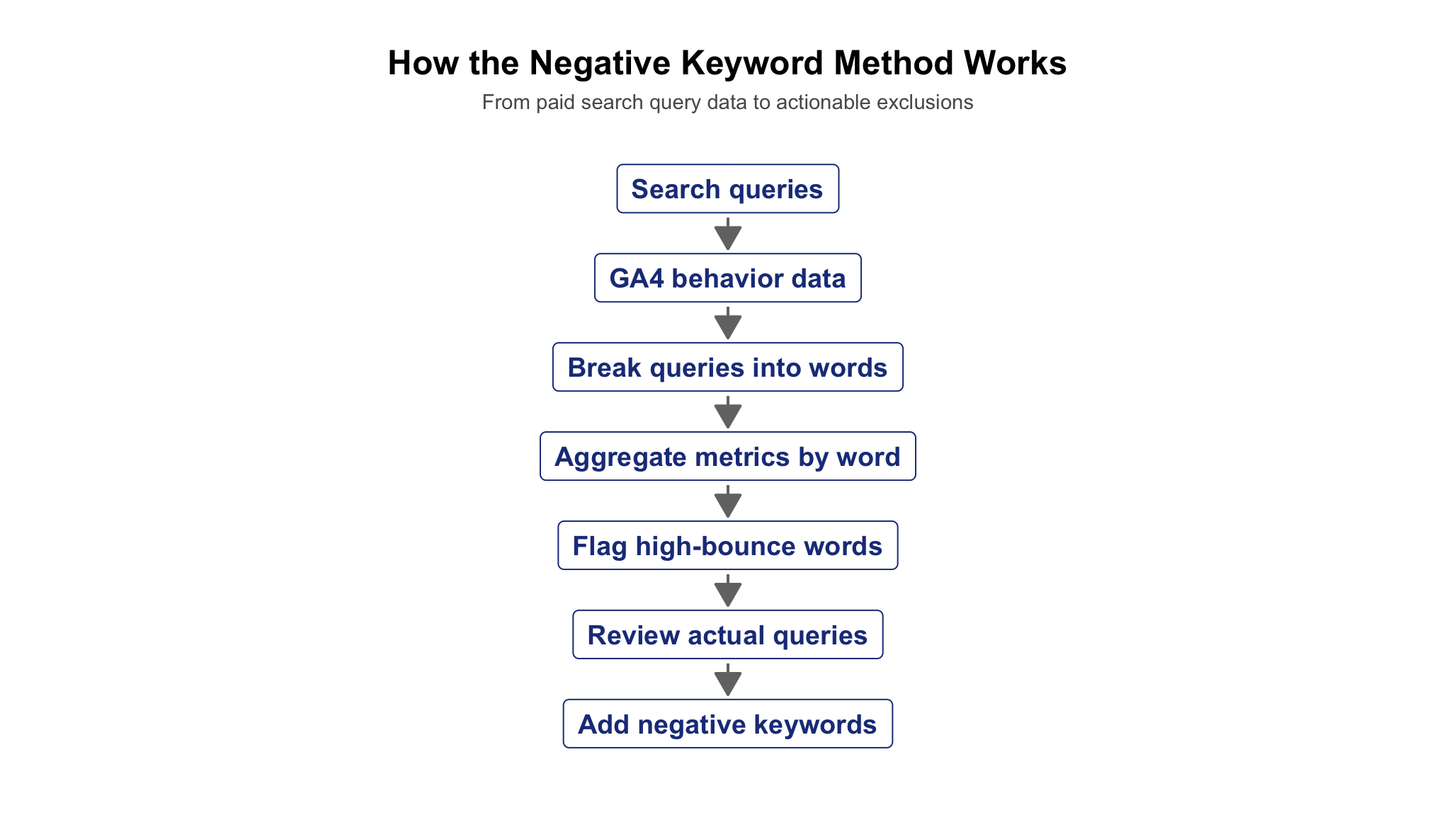

I developed a method to connect search query data with on-site engagement metrics. The analysis required building a custom dataset joining Google Ads query data with GA4 session-level engagement metrics, infrastructure that does not exist out of the box.

Queries were broken into individual words, and performance was aggregated at the word level. Common stopwords were removed so the analysis focused on meaningful, intent-bearing terms. Multi-word clinical and domain-specific terms were preserved as single tokens to prevent meaningful phrases from being split and misclassified. Sessions, bounce rate, and cost were calculated per word.

A reusable, automated tool was built to flag high-bounce words and surface the associated queries for review. The tool was designed to run across multiple accounts and properties simultaneously, making it scalable across an entire client portfolio.
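The tokenization and aggregation steps described above can be sketched roughly as follows. This is a minimal illustration in Python, not the tool itself: the stopword set, the protected-phrase list, the row fields (`query`, `sessions`, `bounces`, `cost`), and the sample numbers are all assumptions invented for the example. It assumes the Google Ads and GA4 data have already been joined into one row per query, and that `bounces` counts sessions that were not engaged.

```python
import re
from collections import defaultdict

# Illustrative stopword set (assumption, not the list used in the real tool).
STOPWORDS = {"a", "an", "the", "is", "what", "for", "of", "to", "in", "and"}

# Multi-word terms kept as single tokens (hypothetical examples).
PROTECTED_PHRASES = ["myasthenia gravis", "lambert-eaton syndrome"]


def tokenize(query):
    """Split a query into intent-bearing tokens, keeping protected phrases whole."""
    text = query.lower()
    tokens = []
    for phrase in PROTECTED_PHRASES:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        if re.search(pattern, text):
            tokens.append(phrase)
            text = re.sub(pattern, " ", text)  # remove so it is not split again
    words = re.findall(r"[a-z0-9]+(?:[-'][a-z0-9]+)*", text)
    tokens.extend(w for w in words if w not in STOPWORDS)
    return tokens


def aggregate_by_word(rows):
    """Sum sessions, bounces, and cost per token, then derive bounce rate."""
    totals = defaultdict(lambda: {"sessions": 0, "bounces": 0, "cost": 0.0})
    for row in rows:
        # set() so a word repeated within one query is only credited once
        for token in set(tokenize(row["query"])):
            entry = totals[token]
            entry["sessions"] += row["sessions"]
            entry["bounces"] += row["bounces"]
            entry["cost"] += row["cost"]
    for entry in totals.values():
        sessions = entry["sessions"]
        entry["bounce_rate"] = entry["bounces"] / sessions if sessions else 0.0
    return dict(totals)


# Made-up sample rows for illustration only; these are not real campaign figures.
sample_rows = [
    {"query": "lamborghini disease", "sessions": 40, "bounces": 38, "cost": 120.0},
    {"query": "what is lamborghini syndrome", "sessions": 60, "bounces": 57, "cost": 180.0},
    {"query": "myasthenia gravis treatment", "sessions": 100, "bounces": 30, "cost": 400.0},
]

word_stats = aggregate_by_word(sample_rows)
for word, stats in sorted(word_stats.items(), key=lambda kv: -kv[1]["cost"]):
    print(word, stats)
```

Because a query's sessions and cost are credited to every token it contains, word-level totals overlap and will not sum to account totals. They are meant for ranking words to review, not for reporting spend.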

The key innovation is analyzing at the word level, not the query level. Individual words that signal mismatched intent are often invisible when buried inside longer queries — but they surface clearly when aggregated across thousands of sessions.
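To make the word-level point concrete, here is a small hedged sketch of the flagging step. The function name `flag_words`, the thresholds (`min_sessions`, `bounce_threshold`), the row fields, and the sample numbers are hypothetical choices for illustration, and the tokenizer is simplified to whitespace splitting. In the made-up data, no single query containing "craigslist" has enough sessions to clear the review threshold on its own, but the word does once its queries are pooled.

```python
from collections import defaultdict


def flag_words(rows, min_sessions=50, bounce_threshold=0.85):
    """Return words whose pooled bounce rate meets the threshold.

    Each result lists the queries containing the word, costliest first,
    so a reviewer can decide whether the word belongs on a negative list.
    """
    by_word = defaultdict(list)
    for row in rows:
        for word in set(row["query"].lower().split()):
            by_word[word].append(row)

    flagged = []
    for word, matches in by_word.items():
        sessions = sum(r["sessions"] for r in matches)
        if sessions < min_sessions:
            continue  # too little traffic to judge
        bounce_rate = sum(r["bounces"] for r in matches) / sessions
        if bounce_rate >= bounce_threshold:
            ranked = sorted(matches, key=lambda r: r["cost"], reverse=True)
            flagged.append({
                "word": word,
                "sessions": sessions,
                "bounce_rate": bounce_rate,
                "cost": sum(r["cost"] for r in matches),
                "queries": [r["query"] for r in ranked],
            })
    return sorted(flagged, key=lambda f: f["cost"], reverse=True)


# Made-up rows for illustration only; these are not real campaign figures.
rows = [
    {"query": "used trucks craigslist", "sessions": 20, "bounces": 19, "cost": 35.0},
    {"query": "craigslist cars by owner", "sessions": 25, "bounces": 25, "cost": 50.0},
    {"query": "craigslist suv under 10000", "sessions": 15, "bounces": 14, "cost": 30.0},
    {"query": "used trucks for sale", "sessions": 200, "bounces": 80, "cost": 600.0},
]

flagged = flag_words(rows)
for item in flagged:
    print(item["word"], round(item["bounce_rate"], 3), item["queries"])
```

The minimum-session cutoff is a design choice: without it, rare words with one or two bounced sessions would flood the review list. The output is a candidate list for human review rather than an automatic exclusion list.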

This tool was built from scratch and has been deployed across multiple organizations spanning healthcare, automotive, and higher education.

Key Insight

The most impactful negative keywords are not obvious ones.

Queries that appear relevant at a glance are often tied to entirely different user intent. These would never be caught through standard search term reviews because nothing about them looks wrong — until you look at what users do after the click. In multiple cases, campaigns were spending against traffic that had no realistic path to conversion.

Examples Across Industries

The following examples illustrate the types of patterns this methodology consistently surfaces across healthcare and automotive — different industries, same underlying problem.

Healthcare — Condition Name Misinterpretation

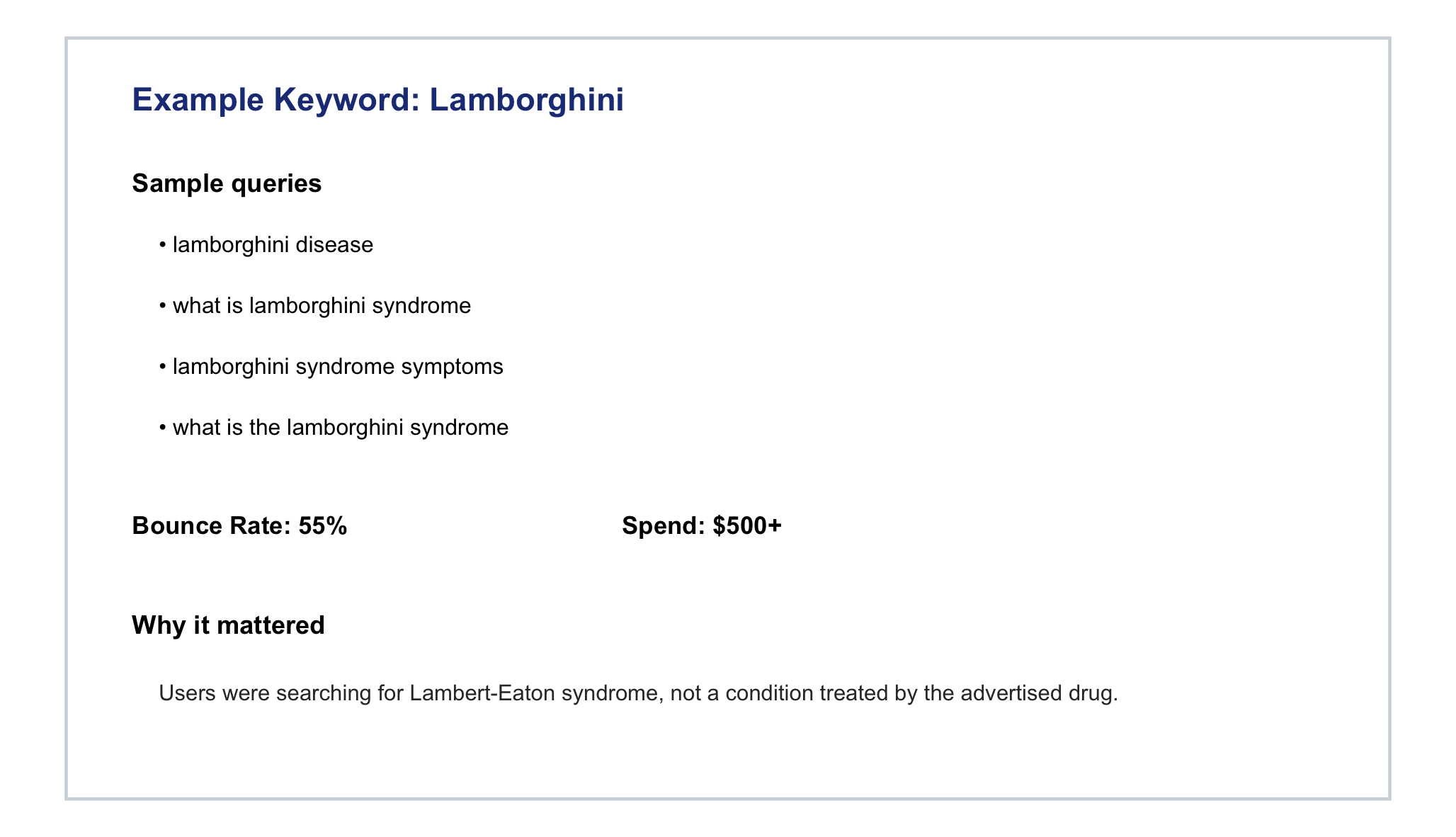

Keyword: Lamborghini

Queries: “lamborghini disease,” “what is lamborghini syndrome”

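Users were looking for Lambert-Eaton syndrome, a condition unrelated to the advertised drug, and had apparently misspelled or misremembered the name. The word “Lamborghini” was flagged immediately at the word level because of its elevated bounce rate and cost. Nothing on a predefined exclusion list would anticipate a car brand in a healthcare account, so manual search term review was unlikely ever to catch it.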

Impact: ~$10K+ in wasted spend identified in just a few months from this single word, a figure that would have continued to grow undetected. The word was added as a negative keyword, eliminating that spend going forward.

Healthcare — Abbreviation Ambiguity

Keyword: Magnesium

Queries: Searches for magnesium supplements, which triggered ads because the advertised drug treats myasthenia gravis (MG). The abbreviation that correctly described the condition also matched completely unrelated supplement searches. The campaign was set up correctly and still attracted the wrong audience because of a single ambiguous abbreviation.

Impact: ~$3K in wasted spend identified.

Healthcare — Competitor Treatment

Keyword: Vyepti

Queries: Traffic related to a migraine treatment, not the advertised condition. Elevated bounce rates revealed competitor-intent traffic that query-level review had missed entirely.

Impact: ~$8K+ in spend with no conversion potential identified.

Automotive — Peer-to-Peer Intent

Keyword: Craigslist

Queries: Users searching for peer-to-peer vehicle listings, not dealership inventory. Bounce rate: ~97%. These users may have wanted a vehicle, but they had no intent to buy one from a dealership.

Impact: ~$1K identified in a single month.

Automotive — Portal Confusion

Keyword: Portal

Queries: Users attempting to access payment or employee systems.

Impact: Removed a consistent source of non-buying traffic at scale across multiple properties.

Patterns Observed Across Industries

Across every industry and organization where this methodology has been applied, the same categories of mismatched intent appear: abbreviation ambiguity where one term means different things to different audiences; condition or product confusion where similar names carry entirely different intent; wrong-funnel traffic where users are at a different stage or looking for something adjacent; and zero-intent traffic where users could never convert regardless of ad quality.

These patterns are not edge cases. They are systemic. Every large paid search account has them. The question is whether you have a method to find them.

Impact

This methodology has been applied across tens of thousands of queries spanning multiple industries and organizations. Each implementation identified patterns that had been invisible to the existing optimization process.

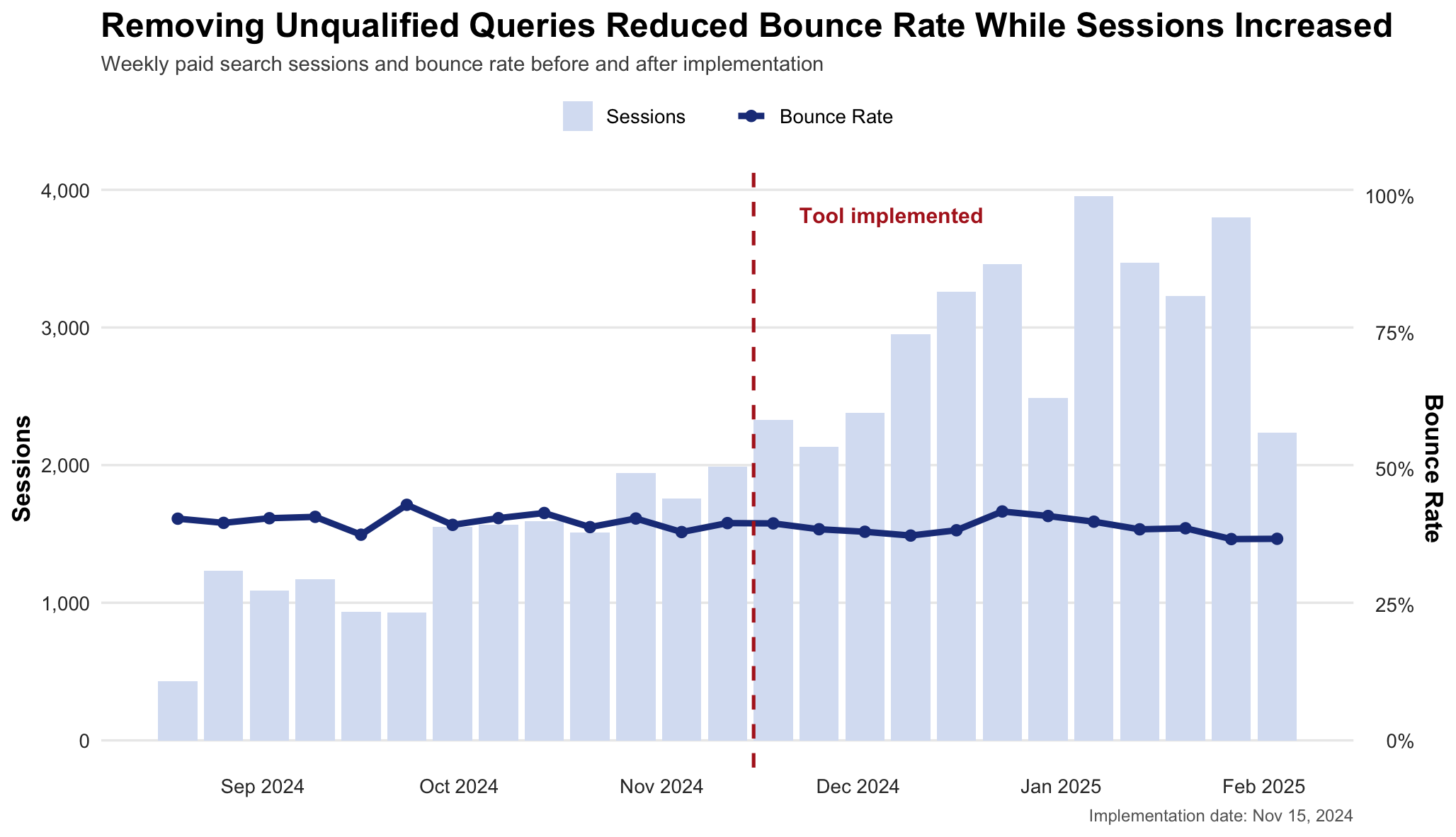

A pre/post analysis conducted around one implementation tells a particularly useful story: bounce rate held steady, improving slightly, even as session volume more than doubled. Removing unqualified traffic had a measurable effect on overall traffic quality at scale, during a period when volume was growing significantly.
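A pre/post comparison of this kind can be computed along the following lines. This is a sketch under stated assumptions rather than the analysis actually performed: the function names, the row fields (`sessions`, `bounces`), and the sample numbers are invented for illustration. Bounce rate is pooled across each period (total bounces divided by total sessions) instead of averaging daily rates, so high-volume days carry proportionally more weight.

```python
def period_summary(rows):
    """Pool sessions and bounces over a period and derive its bounce rate."""
    sessions = sum(r["sessions"] for r in rows)
    bounces = sum(r["bounces"] for r in rows)
    return {
        "sessions": sessions,
        "bounce_rate": bounces / sessions if sessions else 0.0,
    }


def pre_post(pre_rows, post_rows):
    """Compare session volume and pooled bounce rate across two periods."""
    pre = period_summary(pre_rows)
    post = period_summary(post_rows)
    growth = post["sessions"] / pre["sessions"] - 1 if pre["sessions"] else None
    return {
        "pre": pre,
        "post": post,
        "session_growth": growth,  # 1.0 means volume doubled
        "bounce_rate_change": post["bounce_rate"] - pre["bounce_rate"],
    }


# Made-up period data for illustration only; these are not the reported results.
pre_rows = [
    {"sessions": 1000, "bounces": 500},
    {"sessions": 1000, "bounces": 520},
]
post_rows = [
    {"sessions": 2200, "bounces": 1100},
    {"sessions": 2300, "bounces": 1150},
]

result = pre_post(pre_rows, post_rows)
print(result)
```

A comparison like this shows correlation across periods, not causation. Seasonality, budget changes, and landing page updates can all move bounce rate independently of negative keyword work.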

Beyond the metrics, the more significant outcome is structural. Paid search optimization now includes a behavioral layer it previously lacked. Unqualified traffic is identified systematically and continuously, rather than by accident or as a one-time exercise.

Why This Matters

Most paid search optimization stops at the click. This approach starts there.

By connecting query data to on-site behavior, it becomes possible to identify traffic that no amount of bid optimization or ad copy improvement will ever convert — and remove it before it continues to drain budget.

The methodology is scalable, repeatable, and applicable across industries. If your paid search campaigns are large enough to generate significant query volume, this pattern exists in your data.

Implementation

This methodology is implemented as a reusable dashboard and supported by R and Python scripts.